Assessment alternatives 1: using questions instead of criteria

Posted on 07-06-2015

In many blog posts over the last couple of years, I’ve talked about the problems with prose descriptors such as national curriculum levels and grade descriptors. It’s often said that national curriculum levels and the like give us a shared language: actually, as I argue here, they create the illusion of a shared language. I’ve also suggested two possible alternatives: criteria should be defined not through prose but through (1) questions and (2) pupil work. In this post and the next, I’ll expand a bit more on what I mean by these.

Defining criteria through questions

As Dylan Wiliam shows in this pamphlet, even a tightly defined criterion like ‘can compare two fractions to decide which is bigger’ can be interpreted in very different ways. If the two fractions are 3/7 and 5/7, 90% of pupils answer correctly; if they are 5/7 and 5/9, only 15% do. In my experience, a criterion such as ‘understand what a verb is’ will be met by nearly all pupils if it is defined by the following question.

Which of the following words can be used as a verb?

a) run

b) tree

c) car

d) person

e) apple

However, let’s imagine the question is the following:

In which sentences is ‘cook’ a verb?

a) I cook a meal.

b) He is a good cook.

c) The cook prepared a nice meal.

d) Every morning, they cook breakfast.

e) That restaurant has a great cook.

In this case, the percentage getting it right is much, much smaller. The problem when you rely solely on criteria is that some people define a criterion as the former question, whereas others define it as the latter. And in some cases, a criterion may be defined in even more unreliable ways than the above questions.

So, here’s the principle: wherever possible, define criteria through questions, through groups of questions and through question banks. If you must have criteria, have a question bank sitting behind each criterion. Instead of having teachers make a judgment about whether a pupil has met each criterion, have pupils answer the questions. This is far more accurate, and also provides clarity for lesson and unit planning.

Writing questions can be burdensome, but you can share the burden, and once a question is written, you can reuse it, whereas judgments have to be made afresh for every pupil. If you don’t have much technology, you can record results in an old-fashioned paper mark book or on a simple Excel spreadsheet. If you have access to more technology, then you can store questions in a computer database, get pupils to answer them on a computer and have them automatically marked for you, which is a huge timesaver.

Imagine if all the criteria on the national curriculum were underpinned by digital question banks of hundreds, or even thousands, of questions, and if each question came with statistics about how well pupils did on it. It would have great benefits not just for the accuracy of assessment, but also for improving teaching and learning. These questions don’t have to be organised into formal tests and graded – in fact, I would argue they shouldn’t be. Their main aim is to get reliable information on what a pupil can and cannot do. As Bodil Isaksen shows here, the type of data you get from this sort of system is really useful, as opposed to what she calls the ‘junk data’ you get from criteria-judgments.

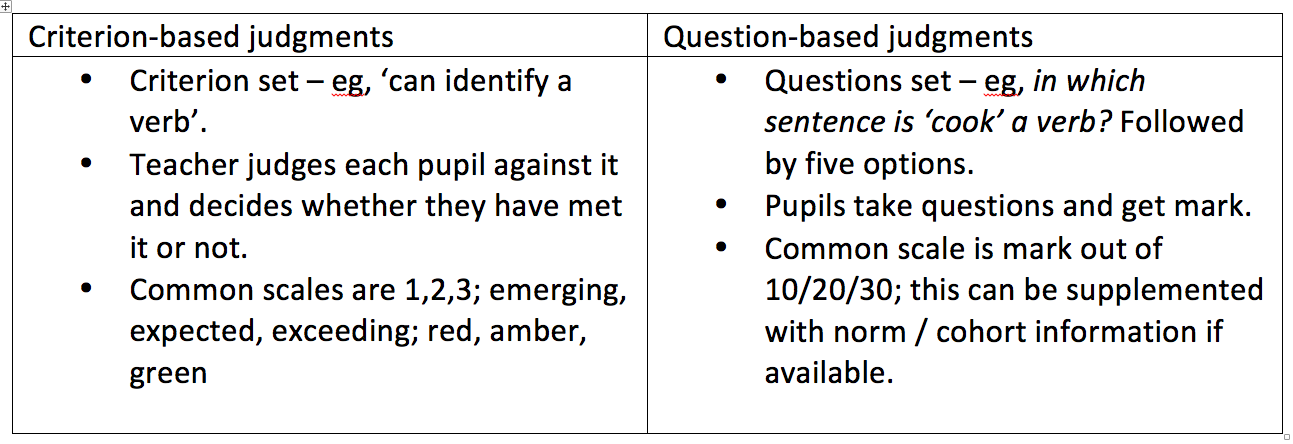

Below is a comparison between the two options. Of course, strictly speaking, question-based judgments don’t entail abolishing criteria. You can still have criteria, but they have to be underpinned by the questions. The crucial difference between the two options is not the existence or otherwise of criteria, but the evidence each option produces.
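For anyone who wants to picture the digital version, here is a minimal sketch in Python of a question bank sitting behind a criterion, with automatic marking and a per-question statistic. It is only an illustration under stated assumptions: the names `Question`, `mark` and `facility` are hypothetical, the marking rule (a pupil must select exactly the correct options) is my assumption, and the three pupils and their answers are invented purely to make the example run. The two questions are the verb questions from earlier in this post.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Question:
    """One closed question behind a criterion (hypothetical structure)."""
    qid: str
    criterion: str
    prompt: str
    correct: frozenset  # labels of every correct option


def mark(question, selected):
    """Automatic marking. Assumption: right only if exactly the correct options are chosen."""
    return set(selected) == question.correct


def facility(bank, responses):
    """Proportion of pupils who answered each question correctly.

    responses is an iterable of (pupil, qid, selected_options) tuples.
    """
    by_id = {q.qid: q for q in bank}
    right = defaultdict(int)
    attempts = defaultdict(int)
    for _pupil, qid, selected in responses:
        attempts[qid] += 1
        right[qid] += mark(by_id[qid], selected)  # True counts as 1
    return {qid: right[qid] / attempts[qid] for qid in attempts}


# Two questions that both sit behind the same criterion.
bank = [
    Question("verb-1", "understand what a verb is",
             "Which of the following words can be used as a verb?",
             frozenset({"a"})),
    Question("verb-2", "understand what a verb is",
             "In which sentences is 'cook' a verb?",
             frozenset({"a", "d"})),
]

# Invented responses, for illustration only.
responses = [
    ("Asha", "verb-1", {"a"}), ("Asha", "verb-2", {"a", "d"}),
    ("Ben", "verb-1", {"a"}), ("Ben", "verb-2", {"a", "b", "d"}),
    ("Cal", "verb-1", {"a"}), ("Cal", "verb-2", {"a"}),
]

stats = facility(bank, responses)
for qid, proportion in stats.items():
    print(f"{qid}: {proportion:.0%}")
```

With these made-up answers, the first question comes out at 100% and the second at 33%, even though both belong to the same criterion. A simple tick against ‘understand what a verb is’ would hide that difference; the per-question figures keep it visible.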

What about essays?

What about subjects where the criteria can’t be defined through questions? For example, we might set an essay question for English, but clearly, the question here is not the same as the above maths and grammar questions. With closed questions like the maths and grammar ones above, most of the effort and judgment comes before the fact, in the creation of the question. In the case of open questions like essays, the effort and judgment mostly come after the fact, in the marking of the response. So here, what is important is not just defining the question, but defining the response. That will be the subject of my next post.